Using New Google Publisher Data To Improve SEO


Recently, Google rolled out a major update to Google Search Console. This update has equipped publishers with a wealth of new data and information, as well as an enhanced user interface. This new Google publisher data offers new opportunities for SEO and for developing popular new content.

Since this info just became globally available, there has never been a better time to attack the strategies I’ve listed below. The release of this unprecedented search data to all publishers means that soon everyone will be finding ways to optimize things like organic CTR among top landing pages.

There will likely be software tools built, blogs written, and “experts” reaching out with a whole host of new “services” (sarcastically in quotes for both) related to the new data Google has made available. This data can directly help you improve modern SEO on your website, but the faster you act, the more likely you are to benefit.

Below, I’ll list some of the basic ways you can use this new Google data for publishers.

What new Google Search Console data helps with SEO?

This is probably the first question publishers asked themselves when Google released the new Search Console beta in December of 2017. Until its global availability, announced in January 2018, publishers were left waiting for some of the new data promised inside Search Console (including a longer record of data than previously allowed).

There are really 2 major changes that will benefit publishers looking to quickly improve SEO on existing pages and to find low-hanging fruit for new content (discussed previously).

We previously wrote some fundamental tips on improving SEO for existing pages here.

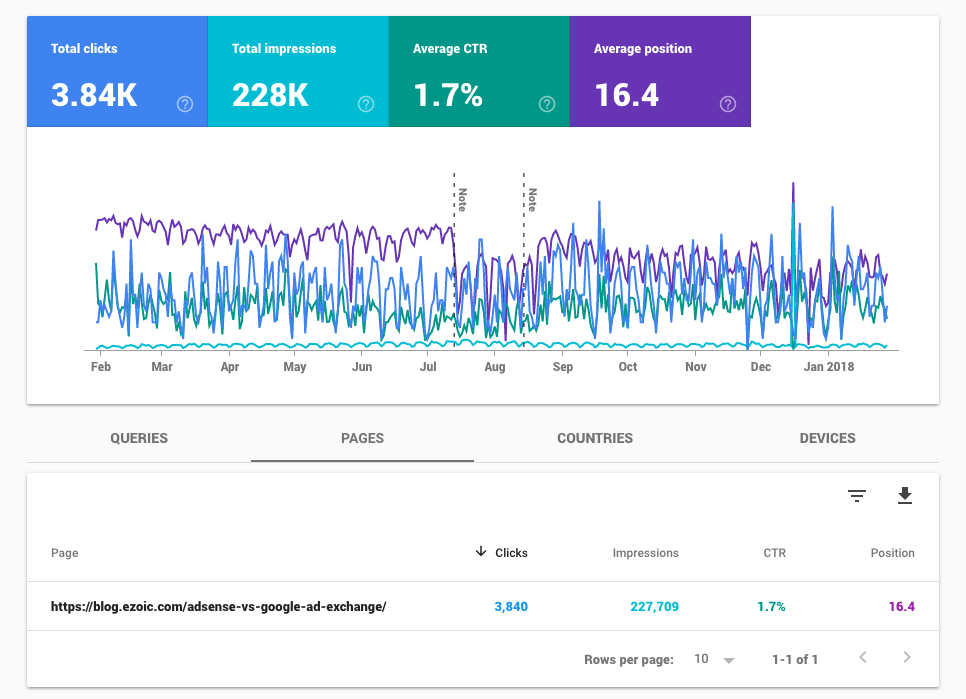

The first piece of new data that publishers will notice inside of Search Console is the ability to see individual search query and individual URL CTR (click-through-rate) data. This means that publishers can now see the CTR that searchers have on their website from specific search queries and on specific pages for results pointing to their website!

To access this CTR data inside of Search Console, go to Status > Performance. Then, deselect all categories except “Average CTR”. Then toggle between pages and queries using the tables below.

We wrote a deep article on how to use this data to hack your way to the top of Google results here. However, now this is easier than ever before because Google is calculating and delivering this data to you… no more spreadsheets or manual calculations.

This data is amazing if you love SEO. Publishers used to actually set up AdWords accounts simply to test the CTR from different <title> tags and <meta> descriptions.

It’s a well-known SEO tip that improving CTR from the SERP (search engine results page) offers some ranking and SEO benefits (whether directly or indirectly).

You can easily see which queries searchers are clicking on the most, as well as the ones where you may be underperforming. The same goes for your individual pages. You can see which pages are outliers, both positively and negatively.

Which pages are getting the highest CTRs? The lowest? Are the title tags or meta descriptions for these pages written differently? What can you glean from this information about which ones are most/least popular?
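
If you export the page-level numbers, one rough way to answer those questions is to flag pages whose CTR sits far from the site's median. The sketch below is only an illustration: the page paths, the figures, the minimum-impressions cutoff, and the 50% band are all made-up assumptions, not anything Search Console prescribes.

```python
from statistics import median

# Hypothetical rows: (page path, clicks, impressions). Not real data.
rows = [
    ("/adsense-vs-adx", 120, 4000),
    ("/seo-basics", 400, 5000),
    ("/site-speed", 45, 4500),
    ("/title-tags", 200, 4000),
]

def ctr(clicks, impressions):
    """Click-through rate as a fraction; 0.0 when there are no impressions."""
    return clicks / impressions if impressions else 0.0

def ctr_outliers(rows, min_impressions=1000, band=0.5):
    """Return (low, high) lists of pages whose CTR is far from the median.

    A page is "low" below (1 - band) * median CTR and "high" above
    (1 + band) * median CTR. Both cutoffs are arbitrary assumptions.
    """
    eligible = [(page, ctr(c, i)) for page, c, i in rows if i >= min_impressions]
    if not eligible:
        return [], []
    mid = median(rate for _page, rate in eligible)
    low = [page for page, rate in eligible if rate < (1 - band) * mid]
    high = [page for page, rate in eligible if rate > (1 + band) * mid]
    return low, high

low, high = ctr_outliers(rows)
print("Low CTR pages:", low)
print("High CTR pages:", high)
```

The pages in the two lists are the ones whose title tags and meta descriptions are worth comparing side by side.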

Title tags and meta descriptions determine what shows up as a preview to searchers in the SERP, so being able to understand searcher behavior relating to this information can offer a whole host of helpful info that we will dive more deeply into below.

But, before we get there, let’s talk about the second important piece of data for publishers inside of the New Google Search Console.

More historical search data for publishers

In addition to powerful new CTR data available to publishers, Search Console has also massively increased the date range in which publishers can go back and review this data. It used to be that Search Console data could only be viewed for the last 90 days.

This used to be very frustrating for publishers trying to understand the long-term implications of their SEO efforts. Now, publishers can go back for up to one year!

Publishers can even use new filters to see this historical data for web, images, and video now as well. This provides some really great data — separated out — for publishers that may be using a lot of structured data on images or video that may be generating some standalone traffic.

This kind of info can provide help in establishing how SEO changes affect the overall performance of a URL — or the site as a whole — over the long-term. For example, you could look at a page to see how impressions, CTR, and position have changed over the course of the year and throughout multiple Google Algorithm Change Rumors.

You can then go back into your CMS and monitor what changes were made to see if you want to make similar changes on other pages, or change something back if the change you made caused a decrease in impressions or average position.

Being able to see when changes you made in your CMS correlate with improvements in things like average position lets publishers scale successful changes across their site, or roll back things that caused major issues. This is an example of working smarter with new data.

Testing CTR and using visitor behavior to drive big SEO improvement

Since we now have this huge bevy of exciting new CTR data, and a longer historical record of it than we’ve ever had before, what else can we do with it to improve SEO?

One of the most popular things you’ll start seeing everyone espouse is testing different combinations of title tags and meta descriptions for underperforming pages. This is a great strategy. Simply go into your CMS or HTML and try changing one of those two tags for your page at a time.

If you change them both, you may have too many variables going at once (leaving you unable to tell which one actually accounted for any changes in the SERP).

But, how do you know what to test?

If you have a page that has a lower CTR than many others, it may be worth taking a look at why it is underperforming for its queries. A good way to do this is to load the SERP and see whether your result stands out, in a good way, against the competing results.

For example, let’s use my URL above for an article I wrote on AdSense vs. The Google Ad Exchange. In the article, I talk about the differences between AdSense and Google AdX.

Let’s see why CTR is underperforming first by looking at how it compares to other results in the SERP. In the one above, I rank highly and may not want to test anything here. I should check a few other queries to determine if making changes is ultimately worth it.

After I determine what changes I believe will offer the biggest benefit, I can go into my CMS and make changes to either my title tag or meta description for that page. Once I make that change, I should ask Google to re-crawl that page so that the changes are seen in SERPs. That way, I can evaluate my changes faster.

To do this, for the time being, you’ll have to use the old version of Search Console.

Once in the old version, go to Crawl > Fetch As Google. Enter the URL and submit it to the index.

Once you’ve had the URL crawled, it’s time to wait. I’d give the experiment at least a few weeks to run its course, especially if the page does not rank for high-volume search queries. If the page is getting tens of thousands of clicks via Google search a day, you may be able to get faster answers.

Use the Search Console page reports to see how results have been impacted. In this case, we can see that my CTR experiment appears to be delivering higher average CTR and search position.

I can monitor this over time to determine if this trend holds true; however, I may choose not to experiment further on this page until I know that my improvements have held up long term.
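
To put a number on a trend like this, you could compare the page's CTR in a window before the change with a window after it. This is a minimal sketch with invented daily figures; the function names are my own, and it does nothing to account for seasonality or ranking shifts that happen for unrelated reasons.

```python
def window_ctr(days):
    """Aggregate CTR over a list of (clicks, impressions) daily pairs."""
    clicks = sum(c for c, _i in days)
    impressions = sum(i for _c, i in days)
    return clicks / impressions if impressions else 0.0

def relative_ctr_change(before, after):
    """Relative change in CTR between two windows, or None if no baseline."""
    base = window_ctr(before)
    if base == 0:
        return None
    return (window_ctr(after) - base) / base

# Hypothetical daily (clicks, impressions) for one page. Not real data.
before = [(10, 500), (12, 500), (8, 500)]
after = [(15, 500), (18, 500), (12, 500)]

change = relative_ctr_change(before, after)
print(f"CTR before: {window_ctr(before):.2%}")
print(f"CTR after:  {window_ctr(after):.2%}")
print(f"Relative change: {change:+.0%}")
```

Summing clicks and impressions across the window, rather than averaging each day's CTR, keeps low-traffic days from skewing the result.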

You can mix this with organic landing page traffic data residing in Google Analytics to confirm that this is actually positively impacting traffic. If I see improvements in both data tools, I probably have a winner.

Additionally, I should probably also see how visitor experiences are affected by these changes. I will want to ensure I am not attracting more traffic that is ultimately bouncing or having short sessions due to misleading content. This can affect revenue and long-term SEO.

If there is a conflict between my Google Analytics data and my Google Search Console data, I may want to revisit my experiment. It is possible to improve CTR and average search position but negatively impact search traffic to that page (e.g., perhaps I improved for certain search queries but lost position on my highest-volume keyword).
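
One way to spot that kind of conflict is to compare clicks per query before and after the change and list the queries that lost ground. The queries and click counts below are hypothetical, and the helper name is just for illustration.

```python
def click_losers(before, after):
    """Return (query, change in clicks) for queries that lost clicks,
    with the largest drop first. Queries missing afterwards count as 0."""
    changes = []
    for query, clicks_before in before.items():
        delta = after.get(query, 0) - clicks_before
        if delta < 0:
            changes.append((query, delta))
    return sorted(changes, key=lambda item: item[1])

# Hypothetical clicks per query for one page. Not real data.
before = {"adsense vs adx": 900, "what is adx": 40, "google ad exchange": 60}
after = {"adsense vs adx": 700, "what is adx": 90, "google ad exchange": 110}

print("Total clicks before:", sum(before.values()))
print("Total clicks after: ", sum(after.values()))
print("Queries that lost clicks:", click_losers(before, after))
```

In this made-up case two smaller queries gained clicks, yet the page lost traffic overall because its highest-volume query dropped.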

This process can be rinsed and repeated for multiple pages.

Using new Google search data to create new content that will rank well

Finding new content ideas that you might be able to rank well for is pretty simple using this new data from Google Search Console. We basically just need to reverse engineer things we’ve done right in the past by revisiting some of this data.

I might want to start by looking at my pages with high average positions, then figure out which of them is actually getting a high volume of traffic. From there, I may want to also explore which of these have high CTR.
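
That three-step filter is easy to sketch against an export of page data. The rows and thresholds here are assumptions for illustration only; sensible cutoffs will depend on the size of your site.

```python
# Hypothetical rows: (page path, clicks, impressions, average position).
pages = [
    ("/adsense-vs-adx", 400, 5000, 2.1),
    ("/niche-post", 50, 500, 1.5),
    ("/broad-guide", 300, 10000, 3.0),
    ("/old-news", 500, 5000, 12.0),
]

def strong_pages(rows, max_position=5.0, min_clicks=100, min_ctr=0.05):
    """Pages that rank well, get meaningful traffic, and have a high CTR.

    All three thresholds are arbitrary starting points, not recommendations.
    """
    result = []
    for page, clicks, impressions, position in rows:
        if position > max_position or clicks < min_clicks or not impressions:
            continue
        if clicks / impressions >= min_ctr:
            result.append(page)
    return result

print(strong_pages(pages))
```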

Keep track of the subjects, themes, and words used in these queries and pages. This may provide deep knowledge of what topics searchers are really digging from your website.
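
A simple way to keep track of those words is to count the terms in your queries, weighted by the clicks each query brings in. The queries, click counts, and stopword list below are placeholders I made up for the sketch.

```python
from collections import Counter

# A tiny, hand-picked stopword list; extend it for real use.
STOPWORDS = {"the", "a", "to", "for", "vs", "is", "what", "how", "and", "of", "in"}

def top_terms(query_clicks, n=5):
    """Rank words across queries, weighting each word by its query's clicks."""
    counts = Counter()
    for query, clicks in query_clicks.items():
        for word in query.lower().split():
            if word not in STOPWORDS:
                counts[word] += clicks
    return counts.most_common(n)

# Hypothetical clicks per query. Not real data.
query_clicks = {
    "adsense vs adx": 700,
    "what is adx": 90,
    "improve adsense revenue": 150,
}

print(top_terms(query_clicks, n=2))
```

Terms that keep showing up near the top of this list are candidates for new articles on related topics.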

We wrote a whole blog on this subject here.

Wrapping it all up

This new Google publisher data is really valuable. If you can use it to drive data-driven decision making in your content strategy, I am confident you’ll see positive results in the next 6-8 months.

What do you think? What other cool things could you do with this new data from Google?

Tyler is an award-winning digital marketer, founder of Pubtelligence, CMO of Ezoic, SEO speaker, successful start-up founder, and well-known publishing industry personality.
